The rapid advancement of multimodal large models has reshaped artificial intelligence, enabling systems to process and understand text, images, audio, and video together. However, deploying these models in real-world applications remains a significant challenge because of their enormous computational demands. As industries increasingly seek to integrate AI into edge devices, IoT systems, and mobile platforms, the need for lightweight deployment solutions has become more pressing than ever.

Why Lightweight Deployment Matters

The sheer size of multimodal models, often comprising billions of parameters, makes them impractical for resource-constrained environments. Traditional deployment approaches require high-end GPUs or cloud-based infrastructure, which are costly, energy-intensive, and introduce latency concerns. Lightweight deployment strategies aim to bridge this gap by optimizing models for efficiency without sacrificing their core capabilities. This shift is critical for applications like real-time translation, autonomous vehicles, and augmented reality, where responsiveness and offline functionality are paramount.

Recent breakthroughs in model compression techniques have opened new possibilities. Three methods are proving effective: pruning, which removes redundant neurons or weights; quantization, which stores weights at lower numerical precision; and knowledge distillation, which trains a smaller model to reproduce the outputs of a larger one. These methods can reduce model sizes by over 60% while maintaining competitive performance. For instance, a pruned version of a vision-language model might retain 90% of its accuracy despite being half the size of the original.
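Two of these ideas, magnitude pruning and low-precision quantization, can be sketched in a few lines. The example below is a minimal illustration on a plain list of floats, not a production pipeline: the function names, the sample weights, the 50% sparsity level, and the choice of symmetric per-tensor int8 quantization are all assumptions made for the sketch.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices of the k weights closest to zero.
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(by_magnitude[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float weights."""
    return [v * scale for v in q]


# Hypothetical weights, chosen only to make the effect visible.
weights = [0.82, -0.03, 0.41, 0.007, -0.65, 0.12, -0.002, 0.29]

pruned = prune_by_magnitude(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(pruned, restored))
```

Storing each weight as an 8-bit integer instead of a 32-bit float takes one byte rather than four, and the zeros introduced by pruning can additionally be stored in a sparse format. How much accuracy survives depends on the model and task, so any such pipeline needs to be evaluated rather than assumed safe.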

Hardware-Software Co-Design Approaches

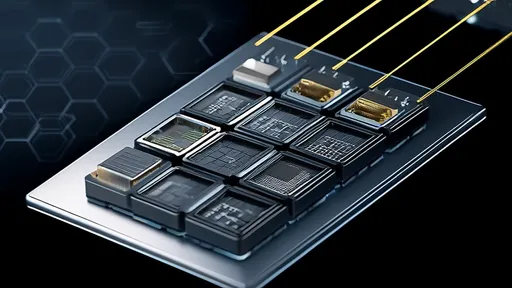

Beyond algorithmic optimizations, successful lightweight deployment relies on tight integration between software and hardware. Chip manufacturers are now designing specialized accelerators tailored for efficient multimodal inference. Neuromorphic chips, which mimic the brain's energy-efficient processing, show particular promise. When paired with optimized model architectures, these chips can deliver dramatic improvements in speed and power consumption.

Software frameworks are evolving to support these advancements. New libraries enable automatic conversion of large models into mobile-friendly formats while preserving multimodal functionality. Developers can now access tools that dynamically adjust model complexity based on available resources, a crucial feature for maintaining consistent user experiences across diverse devices.
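One simple way to adjust model complexity to available resources is to ship several variants of a model and pick the best one that fits at load time. The sketch below assumes exactly that; the variant names, memory figures, quality scores, and the `headroom` parameter are hypothetical placeholders, not measurements of any real model or the API of any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVariant:
    name: str
    memory_mb: int   # hypothetical peak memory needed for inference
    quality: float   # hypothetical relative quality score, higher is better


# Hypothetical variants of one model at different compression levels.
VARIANTS = [
    ModelVariant("full", memory_mb=6000, quality=1.00),
    ModelVariant("int8", memory_mb=1800, quality=0.97),
    ModelVariant("int8-pruned", memory_mb=900, quality=0.93),
    ModelVariant("tiny", memory_mb=300, quality=0.85),
]


def select_variant(available_mb, variants=VARIANTS, headroom=0.8):
    """Return the highest-quality variant that fits in a fraction of free memory.

    `headroom` leaves part of the free memory unused so the rest of the
    application is not starved.
    """
    budget = available_mb * headroom
    fitting = [v for v in variants if v.memory_mb <= budget]
    if not fitting:
        raise RuntimeError("no variant fits in %d MB" % available_mb)
    return max(fitting, key=lambda v: v.quality)


workstation_choice = select_variant(8000)
phone_choice = select_variant(2000)
```

With these placeholder numbers, a device reporting 2000 MB free gets a budget of 1600 MB, so the `int8` variant is skipped and `int8-pruned` is chosen. A real deployment would measure free memory at runtime and might also weigh battery level or latency targets.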

The implications for industry are profound. Healthcare providers can deploy diagnostic AI on portable devices in remote areas. Retailers can implement sophisticated visual search without expensive infrastructure. Educational apps can offer personalized, multimodal learning experiences on budget tablets. Until recently, these applications were impractical without a cloud connection.

Overcoming Deployment Challenges

Despite progress, significant hurdles remain. Keeping a model robust after compression means balancing size reduction against accuracy. Some lightweight techniques may introduce biases or reduce performance on rare but critical edge cases, and an aggregate accuracy score can hide such regressions. There is also the challenge of keeping models updatable after deployment, a necessity in fast-evolving domains like social media analysis.
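A practical guard against edge-case regressions is to compare the original and compressed models slice by slice rather than only overall. The following is a minimal sketch of that idea; the function names, the `max_drop` tolerance of 0.02, and the toy predictions are assumptions made for illustration.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match their labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def slice_regressions(baseline_preds, compressed_preds, labels, slices, max_drop=0.02):
    """Return {slice_name: (baseline_acc, compressed_acc)} for every slice
    whose accuracy falls by more than `max_drop` after compression."""
    indices_by_slice = {}
    for i, name in enumerate(slices):
        indices_by_slice.setdefault(name, []).append(i)

    flagged = {}
    for name, idx in indices_by_slice.items():
        y = [labels[i] for i in idx]
        base = accuracy([baseline_preds[i] for i in idx], y)
        comp = accuracy([compressed_preds[i] for i in idx], y)
        if base - comp > max_drop:
            flagged[name] = (base, comp)
    return flagged


# Toy evaluation set: six common examples and two rare ones.
labels           = [1, 0, 1, 1, 0, 1, 0, 1]
slices           = ["common"] * 6 + ["rare"] * 2
baseline_preds   = [1, 0, 1, 1, 0, 1, 0, 1]   # baseline is right everywhere
compressed_preds = [1, 0, 1, 1, 0, 1, 0, 0]   # compressed model misses one rare case

overall = accuracy(compressed_preds, labels)
flagged = slice_regressions(baseline_preds, compressed_preds, labels, slices)
```

Here the compressed model still scores 0.875 overall, yet its accuracy on the rare slice falls from 1.0 to 0.5, which is the kind of drop an aggregate number would not reveal.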

Privacy concerns add another layer of complexity. While local deployment enhances data security by minimizing cloud transmission, ensuring comprehensive on-device protection demands additional innovation. Researchers are exploring federated learning approaches where lightweight models can improve through distributed learning while keeping sensitive data localized.
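The core aggregation step of one common federated scheme, federated averaging, can be sketched as follows. This is a simplified illustration under stated assumptions: each device is assumed to report a flat list of weights plus the number of local examples it trained on, and the function name and sample values are hypothetical. Real systems add secure aggregation, client sampling, and many other details.

```python
def federated_average(client_updates):
    """Average client weight vectors, weighted by local example counts.

    client_updates: list of (weights, num_examples) pairs, where `weights`
    is a list of floats with the same length on every client.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no training examples reported")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("clients reported weight vectors of different sizes")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Two hypothetical devices; only weights and counts leave each device,
# never the raw training data.
updates = [
    ([1.0, 2.0], 10),
    ([3.0, 4.0], 30),
]
global_weights = federated_average(updates)
```

The second device contributes three times as many examples, so its weights count three times as much in the result.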

The environmental impact of AI has also come under scrutiny. Lightweight models make deployment not only feasible but also more sustainable. A recent study showed that optimized multimodal models could reduce carbon emissions by up to 80% compared to their full-sized counterparts when deployed at scale. This sustainability angle is driving increased investment from environmentally conscious organizations.

Future Directions and Industry Adoption

Looking ahead, the field is moving toward more adaptive solutions. Instead of static compressed models, we're seeing the rise of dynamic architectures that can reconfigure themselves based on task requirements and available resources. This flexibility will be crucial as multimodal AI expands into unpredictable real-world environments.
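Early exit is one example of such a dynamic architecture: the model runs its stages in order and stops as soon as an intermediate prediction is confident enough, so easy inputs cost less than hard ones. The sketch below assumes a hypothetical stage interface and uses toy stand-in stages; the names, the confidence threshold, and the `max_stages` resource cap are illustrative assumptions rather than any specific system's design.

```python
def run_with_early_exit(stages, x, confidence_threshold=0.9, max_stages=None):
    """Run stages in order, stopping once a stage is confident enough.

    Each stage is a callable that takes the current state and returns
    (new_state, label, confidence). `max_stages` caps how many stages may
    run, for example on a device with a tight compute budget.
    Returns (label, confidence, stages_used).
    """
    active = stages if max_stages is None else stages[:max_stages]
    if not active:
        raise ValueError("at least one stage is required")
    label, confidence, used = None, 0.0, 0
    for stage in active:
        x, label, confidence = stage(x)
        used += 1
        if confidence >= confidence_threshold:
            break
    return label, confidence, used


def make_toy_stage(gain):
    """Toy stand-in for a network block: each stage raises confidence by `gain`."""
    def stage(state):
        confidence = min(1.0, state + gain)
        return confidence, "cat", confidence
    return stage


stages = [make_toy_stage(0.5), make_toy_stage(0.45), make_toy_stage(0.2)]

easy = run_with_early_exit(stages, 0.0, confidence_threshold=0.9)
hard = run_with_early_exit(stages, 0.0, confidence_threshold=0.99)
capped = run_with_early_exit(stages, 0.0, confidence_threshold=0.99, max_stages=1)
```

With these toy stages, the lower threshold is met after two stages, the stricter one needs all three, and the capped run stops after one stage regardless of confidence. The threshold reflects task requirements and the cap reflects available resources.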

Industry adoption patterns reveal interesting trends. While tech giants were early adopters, small and medium enterprises are now leading innovation in niche applications. A boutique marketing firm might develop a lightweight model for analyzing social media engagement across text and images, while a manufacturing startup could create a compact visual-auditory system for quality control.

The democratization of these technologies is accelerating. Open-source initiatives are making lightweight deployment tools accessible to individual developers and researchers. This grassroots innovation is yielding creative solutions that challenge conventional wisdom about what's possible with constrained resources.

As the technology matures, we're likely to see standardization efforts emerge. Common benchmarks for evaluating lightweight multimodal models would help compare approaches objectively. The community also needs best practices for deciding when compression is appropriate and how to validate compressed models thoroughly.

The journey toward efficient multimodal deployment is just beginning. What started as a necessity for mobile applications has evolved into a broader rethinking of how we design and implement AI systems. The solutions emerging today will shape the next decade of intelligent applications, making advanced AI capabilities available wherever they are needed, not just where infrastructure permits.

By /Jul 29, 2025
