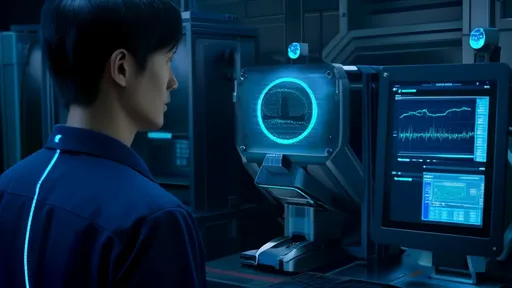

In a quiet lab at the intersection of neuroscience and computer graphics, researchers are wiring actors up to machines that look like they belong in a cyberpunk novel. Electrodes cling to facial muscles like high-tech temporary tattoos, capturing the subtle electrical whispers of movement before it happens. This isn't medical research - it's the cutting edge of animated storytelling, where electromyography (EMG) is becoming the invisible puppeteer behind some of the most nuanced digital performances ever created.

The technology works by detecting the electrical potentials generated by muscle fibers when they contract. Unlike traditional motion capture, which requires bulky suits or facial marker arrays, EMG sensors can be placed discreetly beneath the chin, along the jawline, or near the temples. Because a muscle's electrical activity precedes the visible movement it produces by a fraction of a second, the sensors register an expression as it begins to form, including activations too slight to see. The result is a highly responsive digital avatar that mirrors intent rather than just motion.
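To make the signal path concrete, here is a minimal sketch of one common way to turn a raw EMG trace into an animation value: compute a moving RMS envelope, flag the onset when the envelope rises above a resting baseline, and clamp the result to a 0..1 weight. It does not describe any particular studio's pipeline. The function names, the window length, the threshold rule (baseline mean plus three standard deviations), and the synthetic signal are all assumptions chosen for illustration.

```python
import math
import statistics

def rms_envelope(samples, window):
    """Root-mean-square amplitude over a trailing window ending at each sample."""
    envelope = []
    for i in range(len(samples)):
        chunk = samples[max(0, i - window + 1): i + 1]
        envelope.append(math.sqrt(sum(x * x for x in chunk) / len(chunk)))
    return envelope

def detect_onset(envelope, baseline_len, k=3.0):
    """Find the first sample after the resting baseline whose envelope
    exceeds mean + k * std of that baseline.

    Returns (index, threshold); index is None if the threshold is never crossed.
    """
    baseline = envelope[:baseline_len]
    threshold = statistics.mean(baseline) + k * statistics.pstdev(baseline)
    for i in range(baseline_len, len(envelope)):
        if envelope[i] > threshold:
            return i, threshold
    return None, threshold

def to_weight(value, rest, peak):
    """Clamp an envelope value to a 0..1 animation weight."""
    if peak <= rest:
        return 0.0
    return min(1.0, max(0.0, (value - rest) / (peak - rest)))

# Synthetic stand-in for one EMG channel (arbitrary units, not real data):
# 200 samples of low-amplitude rest, then a 100-sample contraction burst.
signal = [0.01 * math.sin(0.9 * i) for i in range(200)]
signal += [0.5 * math.sin(0.9 * i) for i in range(200, 300)]

envelope = rms_envelope(signal, window=20)
onset, threshold = detect_onset(envelope, baseline_len=100)
peak = max(envelope)

# One weight per sample; a rig could use this to drive a single expression control.
weights = [to_weight(v, threshold, peak) for v in envelope]
```

A real system would filter the raw signal first and calibrate the rest and peak levels per performer and per session. The sketch only shows the shape of the mapping from muscle activity to an animation control.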

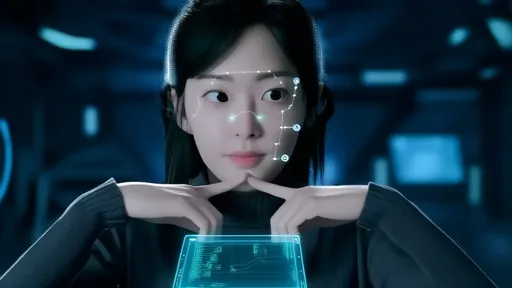

What makes this approach revolutionary isn't just the technical achievement but also its philosophical implications. For decades, animators have struggled with the "uncanny valley" - that eerie discomfort when almost-but-not-quite-human digital faces move in ways that feel subtly wrong. EMG-driven animation aims to sidestep this by capturing the authentic neuromuscular signatures that make human expressions feel human. The resulting performances carry the involuntary micro-expressions and emotional leakage that method actors spend years perfecting.

Early adopters include video game studios pushing for more immersive NPC interactions and filmmakers creating digital doubles for sensitive posthumous performances. One notable application allowed a paralyzed actor to control a digital character's face using only the residual muscle activity around his eyes. The emotional impact was profound - technology originally developed for medical prosthetics gave him back the full range of theatrical expression he thought he'd lost forever.

The challenges remain significant. Facial EMG signals are orders of magnitude weaker than those from larger muscle groups, requiring exquisitely sensitive amplifiers and advanced noise-filtering algorithms. Researchers are developing machine learning models that can distinguish between, say, a genuine smile and a grimace based on the distinct activation patterns of muscles such as the zygomaticus major, which pulls up the corners of the mouth, and the orbicularis oculi, which tightens the skin around the eyes. It's a level of biological nuance that even seasoned animators struggle to replicate manually.
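The classification idea can be illustrated with a deliberately simple stand-in for the machine learning models mentioned above: a nearest-centroid classifier over per-channel activation levels. This is a sketch, not the researchers' method. The two channels are hypothetical electrode sites over different facial muscles, and the training values are invented for the example rather than measured.

```python
import math

def centroid(vectors):
    """Component-wise mean of a list of equal-length feature vectors."""
    n = len(vectors)
    return tuple(sum(v[i] for v in vectors) / n for i in range(len(vectors[0])))

def train(labelled):
    """Map each expression label to the centroid of its example vectors."""
    return {label: centroid(examples) for label, examples in labelled.items()}

def classify(model, features):
    """Return the label whose centroid is closest (Euclidean) to `features`."""
    return min(model, key=lambda label: math.dist(model[label], features))

# Invented activation levels (normalised 0..1) for two hypothetical channels:
# (channel_0, channel_1), each sitting over a different facial muscle.
training = {
    "smile":   [(0.80, 0.30), (0.90, 0.40), (0.70, 0.35)],
    "grimace": [(0.30, 0.80), (0.20, 0.90), (0.35, 0.70)],
}

model = train(training)
first_guess = classify(model, (0.75, 0.30))
second_guess = classify(model, (0.25, 0.85))
```

With these made-up numbers the two expressions separate cleanly. Real facial EMG is much noisier and overlaps across channels, which is why production systems would need far more data and more capable models than this.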

Perhaps most intriguing is how this technology is changing our understanding of expression itself. By analyzing the EMG patterns across diverse populations, scientists have identified universal muscular signatures for basic emotions that transcend cultural display rules. The technology doesn't just capture how we move our faces - it's revealing how we embody emotion at a fundamental physiological level. This has implications far beyond entertainment, from improving affective computing to developing new tools for diagnosing neurological conditions.

As the hardware shrinks and the algorithms improve, we're approaching a future where performers might control digital characters as naturally as they move their own bodies. The implications for virtual production are staggering - imagine an actor performing live in VR while their facial expressions drive an animated character in real-time, with no post-production cleanup required. It's a level of immediacy that could fundamentally change how we create and consume animated content.

The technology isn't without its ethical quandaries. The same systems that can restore expressive ability could also be used to create deepfake performances without an actor's consent. There are also concerns about emotional labor - if an actor's slightest micro-expressions become part of a digital asset that can be reused indefinitely, who owns that biological performance data? These are questions the industry is only beginning to grapple with as the line between performer and performance becomes increasingly blurred.

What began as a niche research project is rapidly becoming a transformative force in digital media. From video games to virtual influencers, from therapeutic applications to next-generation human-computer interfaces, EMG-driven facial animation represents more than just a new technical toolset. It's a fundamental reimagining of how we bridge the gap between human emotion and digital representation - one muscle twitch at a time.

By /Jul 29, 2025
